AI may sound human...but whose values does it reflect?

Fri, 6th Mar 2026

When a large language model appears "human-like," whose values is it actually reflecting?

A study conducted by a team at Harvard University, including cultural psychology academic Mohammad Atari, who spoke with TechDay, suggests the answer may be more specific than many assume.

Rather than reflecting a global cross-section of humanity, which comprises an indeterminable number of cultures, LLMs tend to align most closely with the values of Western societies.

The research, titled "Which Humans?", analyses how an AI model responded to questions from the World Values Survey, one of the most widely used international datasets on cultural and political attitudes.

The results suggest that modern AI systems occupy a cultural space close to that of Western democracies, raising important implications for countries deploying these tools across public services and institutions.

The researchers approached the problem in an unusual way: they treated the AI system as another country in the global dataset.

Using the World Values Survey, which measures attitudes toward issues such as democracy, equality, immigration, religion, and trust, the team asked the model the same questions posed to human respondents in dozens of countries.

The survey iteration, with data collected from mid-2017 to early 2022, asks personal questions of varying complexity, from "How important is leisure time?" to "What do you think should international organisations prioritise, being effective or being democratic?"

Atari, then a postdoctoral fellow at Harvard University, now an Assistant Professor at the University of Massachusetts Amherst, compared the AI model's answers to national averages of 94,278 individuals from 65 nations.

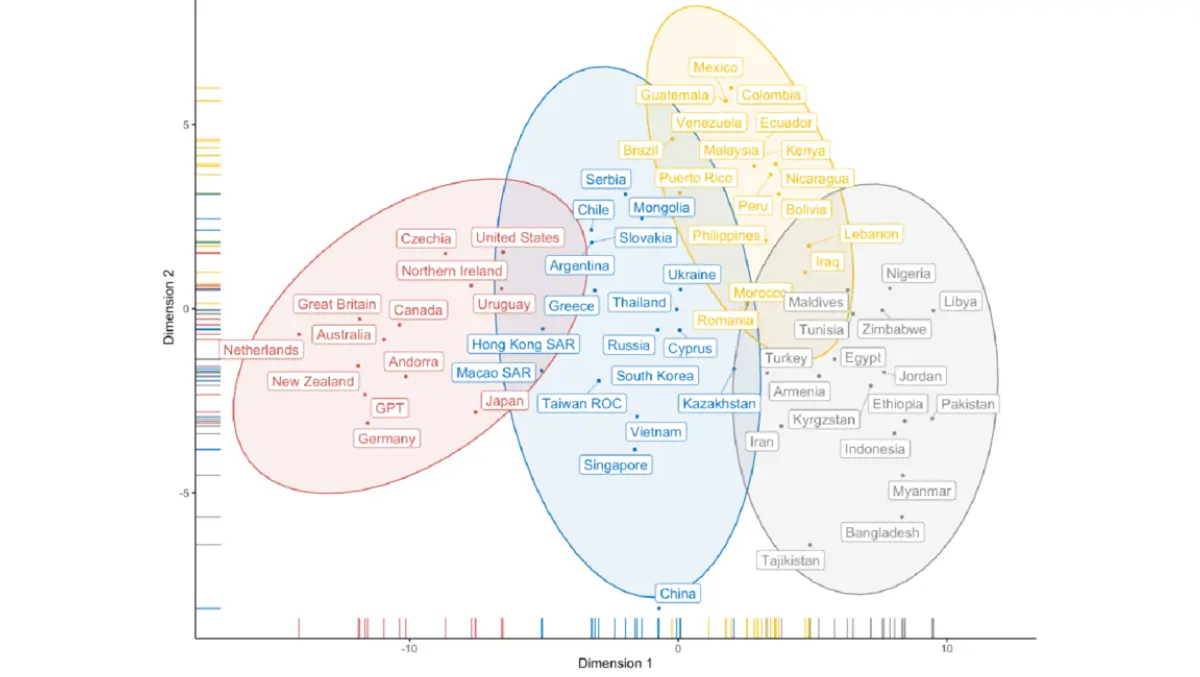

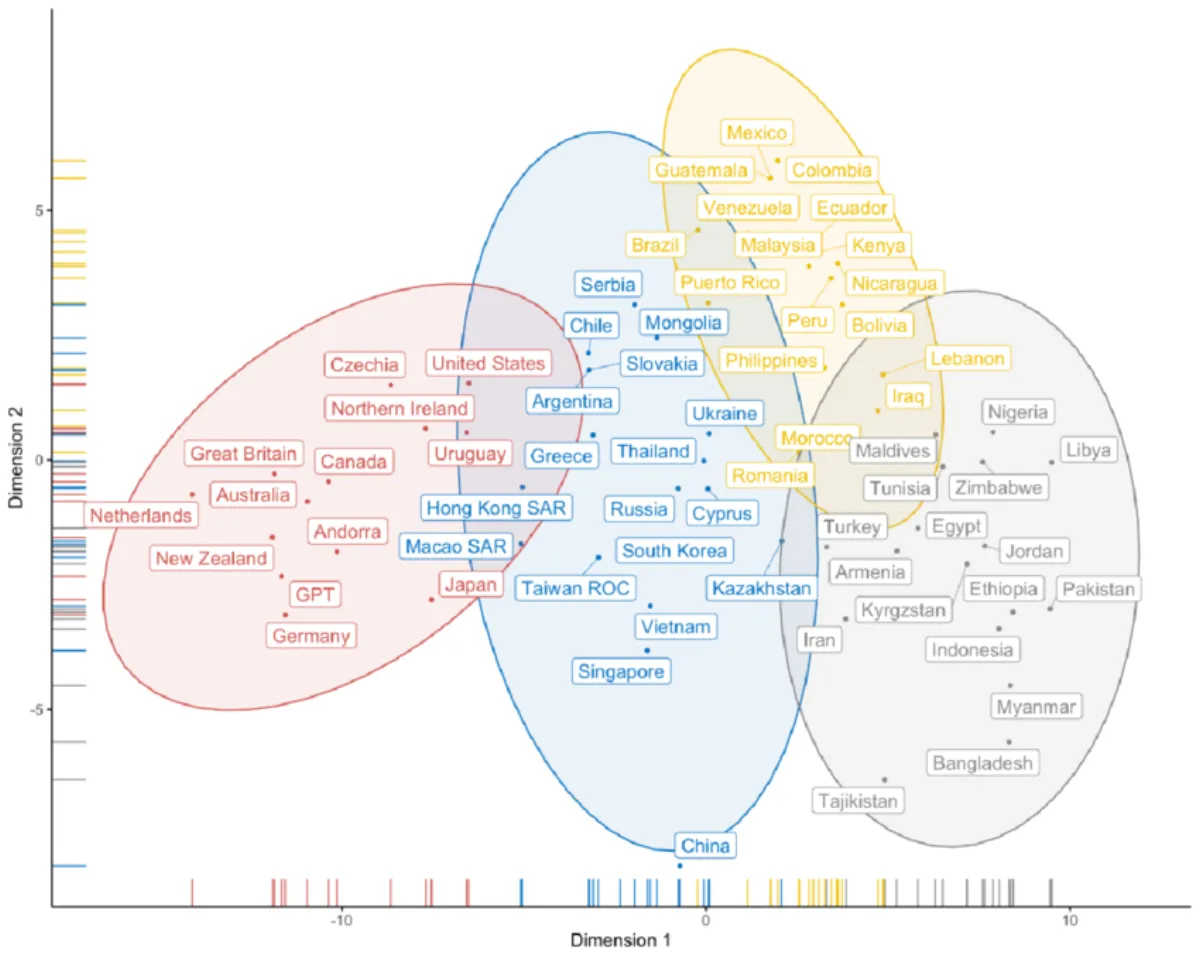

The result was a global map in which countries cluster according to shared values. Western democracies tend to group together, while other regions (including parts of Asia, Latin America and the Middle East) form distinct clusters.

"It's a dimension reduction analysis in which [you're] reducing a lot of cultural variables like morality, ethics, politics, well-being and so on. You're projecting a lot of data onto two dimensions and using this analysis, we can also detect communities of countries that go together," said Atari.

This figure, published with the research, shows the overlap in values among countries included within the data. ChatGPT is represented as a country for perspective.

The AI model landed squarely within the Western group (red cluster). The blue cluster represents mostly Asian societies, the yellow cluster includes many Latin American countries, and the grey cluster comprises many Muslim-majority societies, including some Middle Eastern and some African countries.

ChatGPT's responses were statistically closest to those of Germany, Britain, Australia, and New Zealand.
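The idea of treating the model as one more row in the dataset and asking which countries sit nearest to it can be sketched in a few lines of Python. This is a simplified illustration, not the researchers' actual pipeline: it uses plain Euclidean distance as a stand-in for their analysis, and the country names, the three survey items and every number are made up for the example rather than taken from the World Values Survey.

```python
import math

# Hypothetical, made-up mean answers on three survey items (scaled 0 to 1).
# These are NOT World Values Survey figures; the names are placeholders.
national_means = {
    "Country A": [0.82, 0.31, 0.64],
    "Country B": [0.78, 0.35, 0.60],
    "Country C": [0.40, 0.75, 0.22],
    "Country D": [0.35, 0.80, 0.30],
}

# The model's averaged answers to the same three items (also made up).
model_means = [0.80, 0.30, 0.66]


def euclidean(a, b):
    """Straight-line distance between two equal-length answer vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_by_closeness(target, rows):
    """Return (name, distance) pairs sorted from nearest to farthest."""
    distances = [(name, euclidean(target, vec)) for name, vec in rows.items()]
    return sorted(distances, key=lambda pair: pair[1])


ranking = rank_by_closeness(model_means, national_means)
for name, dist in ranking:
    print(f"{name}: {dist:.3f}")
```

With these invented numbers the model lands next to the two placeholder countries whose answers resemble its own. A real analysis would use many more survey items and a dimension reduction step before drawing a map.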

For Western observers, the finding may feel unsurprising. Much of the data used to train modern language models originates from English-language internet sources and Western media ecosystems.

But the implications extend beyond training data.

The "WEIRD" bias in AI

The study reflects a longstanding concept in psychology known as the "WEIRD" problem - the tendency for research participants to come disproportionately from societies that are Western, Educated, Industrialised, Rich and Democratic.

For decades, psychologists have warned that conclusions drawn from WEIRD populations may not generalise globally.

Joseph Henrich, a professor in Harvard's Department of Human Evolutionary Biology and the senior author of this study, published research analysing WEIRD in 2010. It stated, "these empirical patterns suggest that we need to be less cavalier in addressing questions of human nature on the basis of data drawn from this particularly thin, and rather unusual, slice of humanity."

The same Western pattern appears to be emerging in artificial intelligence.

"We realised that the psychology of GPT might also be WEIRD and quite different from much of the human diversity around the globe," Atari said.

For countries like Canada or the United States, that alignment may feel relatively natural. Canadian political culture broadly overlaps with the liberal democratic norms common in Western Europe and other Anglosphere nations.

But the situation becomes more complicated when these systems are deployed globally.

Language alone doesn't explain the bias

One obvious explanation for the Western alignment is language. Many LLMs operate primarily in English, which could naturally bias their responses toward English-speaking cultures.

But Atari's team tested that hypothesis.

In follow-up experiments, the researchers repeated their analysis using newer models and translated the survey questions into Spanish. The results remained largely unchanged.

Atari said this suggests the cultural alignment of the models reflects deeper structural factors, including the composition of training data and the human feedback used during model alignment.

AI's uneven understanding of cultures

More recent research, now underway at the University of Massachusetts Amherst's Culture and Morality Lab, explores how well AI systems understand the moral values of different countries.

Early results suggest a similar pattern: the models perform relatively well when predicting the values of Western societies, but struggle when estimating values in non-Western regions. In some cases, they systematically underestimate the importance of particular values in those countries.

"In some cases, they overestimate the amount of care and equality that Western societies have, but they are much less accurate for less WEIRD societies or for non-Western societies," said Atari. "If you ask LLMs about the moral values of the U.S. or Belgium or Germany, they're going to get them mostly right. They might even overestimate the morality of these countries. But if you ask these models about the moral values of Egypt and Turkey, they are going to get the moral norms quite wrong and in systematic ways."

Precision in how we talk about AI

One of Atari's key takeaways is linguistic rather than technical.

Researchers and industry leaders often describe AI behaviour as "human-like," but that phrasing can obscure important cultural differences.

Instead, Atari argues for more precise comparisons.

Rather than saying an AI system reflects "human values," it may be more accurate to say it resembles the values of particular populations, such as Western liberal democracies.

After all, if artificial intelligence is beginning to shape information, decisions, and public discourse, understanding whose values it mirrors may matter just as much as how intelligent it appears.

"With other countries...be aware of the cultural bias when you are making generalisations and be allergic to the term 'human'. Not drawing comparisons between AI and humans as a monolithic category...those are the things that would be great to talk about, especially in industry, as people do more AI work," said Atari.